Data Engineering

Build scalable, reliable, and high-performance data ecosystems that help organizations unlock insights, improve decision-making, and accelerate digital transformation initiatives.

We help businesses design, modernize, and manage data platforms capable of handling structured, unstructured, and real-time data across multiple systems and cloud environments.

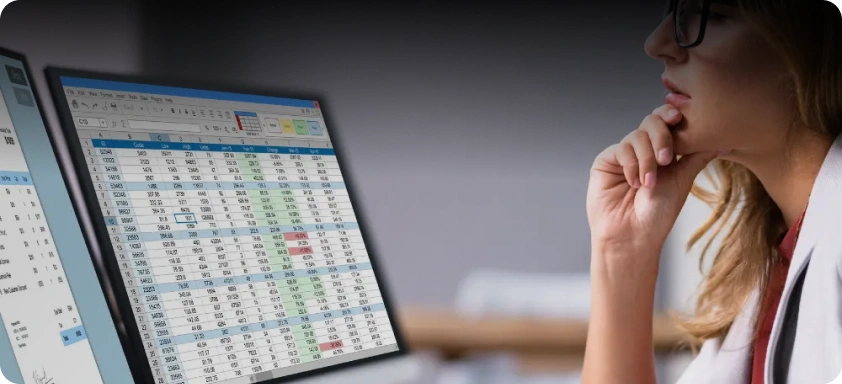

Scalable Data Engineering Services for Analytics, Automation, and Enterprise Transformation

Our data engineering services enable organizations to collect, process, store, and analyze large volumes of data efficiently. From data pipelines and warehousing to analytics and AI-ready architectures, we create robust foundations for data-driven businesses.

Whether you are modernizing legacy systems or building a cloud-native data platform, our engineers help streamline your entire data lifecycle.

Scalable Data Pipelines

Develop reliable ETL and ELT pipelines that automate data collection, transformation, and synchronization across enterprise applications, APIs, cloud systems, and databases for faster, more accurate business insights.
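As a rough illustration of what an ETL step involves, the sketch below extracts rows from CSV text, cleans them, and loads them into a database using only the Python standard library. The sample data, the `orders` table, and every function name are hypothetical, chosen for the example; a production pipeline would typically run under an orchestrator and write to a warehouse rather than SQLite.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; in practice this would come from an API, file, or source database.
RAW_CSV = """order_id,customer,amount
1, alice ,19.99
2,BOB,5.00
3,carol,not_a_number
"""


def extract(text):
    """Read CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Normalize customer names, parse amounts, and skip rows that fail validation."""
    clean = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # assumption: bad rows are dropped; a real pipeline might quarantine them
        clean.append((int(row["order_id"]), row["customer"].strip().lower(), amount))
    return clean


def load(conn, rows):
    """Upsert rows so that re-running the pipeline does not create duplicates."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders ("
        "order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO orders (order_id, customer, amount) VALUES (?, ?, ?)",
        rows,
    )
    conn.commit()


def run_pipeline(conn, text=RAW_CSV):
    """Run extract, transform, and load, then return the loaded rows."""
    load(conn, transform(extract(text)))
    return conn.execute(
        "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
    ).fetchall()
```

Calling `run_pipeline(sqlite3.connect(":memory:"))` loads the two valid rows and skips the malformed one. Because the load step upserts on the primary key, running it twice leaves the table unchanged, which is the property that makes scheduled re-runs safe.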

Real-Time Data Processing

Enable live analytics and event-driven architectures with real-time data streaming solutions that support operational intelligence, monitoring, automation, and faster decision-making.
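One common building block of live analytics is windowed aggregation: grouping incoming events into fixed time buckets and counting them. The sketch below shows the idea in plain Python. The `(timestamp, event_type)` event shape and the function name are assumptions for illustration, and the plain iterable stands in for a real stream consumer such as a Kafka topic.

```python
from collections import defaultdict


def tumbling_window_counts(events, window_seconds=60):
    """Count events per (window_start, event_type) using fixed, non-overlapping windows.

    `events` is any iterable of (timestamp_in_seconds, event_type) pairs.
    """
    counts = defaultdict(int)
    for timestamp, event_type in events:
        # Round the timestamp down to the start of its window.
        window_start = int(timestamp // window_seconds) * window_seconds
        counts[(window_start, event_type)] += 1
    return dict(counts)
```

For example, with 60-second windows, events at seconds 0 and 30 fall in the window starting at 0, while events at seconds 61 and 119 fall in the window starting at 60. A streaming engine adds what this sketch leaves out, such as handling late events and emitting results as each window closes.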

Data Warehousing Solutions

Create centralized and optimized data warehouses that improve reporting accuracy, business intelligence, analytics performance, and enterprise-wide access to structured organizational data.

AI-Ready Data Architecture

Prepare clean, governed, and scalable data foundations that support machine learning, predictive analytics, LLM applications, and intelligent automation initiatives across business operations.

We help build reliable, scalable, and intelligent data ecosystems designed to support automation, analytics, and AI-driven business operations.

Why Choose Us For Data Engineering?

We work with enterprise-grade databases including SQL Server, MySQL, PostgreSQL, MongoDB, Oracle, and Redis to build scalable, secure, and high-performance data infrastructures for modern business applications.

Our engineers leverage technologies such as Python, Apache Spark, Kafka, Airflow, Hadoop, and dbt to develop robust ETL pipelines, real-time processing systems, and large-scale data transformation workflows.

We build cloud-native data ecosystems using Snowflake, Azure Data Factory, Azure Synapse, Databricks, Amazon Redshift, and BigQuery to enable scalable analytics, centralized reporting, and intelligent data operations.

We utilize modern analytics and visualization platforms including Power BI, Tableau, Looker, Elasticsearch, and Kibana to help organizations gain actionable insights through interactive dashboards and reporting solutions.

Our Case Study

Turning challenges into measurable outcomes.

FAQs: Data Engineering

What are data engineering services?

Data engineering services involve designing, building, and managing systems that collect, process, transform, and store data for analytics, reporting, and AI applications.

Which technologies do you work with?

We work with SQL Server, Python, Snowflake, Databricks, Azure Data Factory, Apache Spark, Kafka, Power BI, PostgreSQL, MySQL, and multiple cloud platforms.

Can you modernize legacy data systems?

Yes. We help organizations migrate and modernize legacy databases, reporting systems, and data infrastructure into scalable cloud-native platforms.

Do you support real-time data processing?

Yes. We develop real-time streaming and event-driven architectures for live analytics, monitoring, and operational intelligence solutions.

Can your data engineering solutions support AI initiatives?

Absolutely. We create AI-ready data architectures optimized for machine learning, predictive analytics, LLM applications, and intelligent automation systems.

Do you provide dedicated data engineering resources?

Yes. We offer dedicated data engineers, ETL developers, SQL developers, BI specialists, cloud data engineers, and complete data engineering teams based on project requirements.